AI Compliance

Methodical EU AI Act compliance for German SMEs. I guide you from initial inventory to ongoing monitoring.

Book a first call

Two packages

Compliance that fits your situation

Compliance is not a one-off project. Depending on your starting point, you need either a quick reality check or a full audit. The two packages build on each other and prepare you for ongoing compliance management.

AI Compliance Check

What you get

You know where you stand. An initial inventory of your AI usage, a risk assessment and a concrete priority list. Implementation is still ahead of you.

- 60-minute briefing call to capture the starting situation

- Cataloguing of 2-3 AI systems in the NADOVO platform

- Initial risk classification (mandatory categories per EU AI Act)

- Written compact report on the current compliance situation

- Concrete recommended next steps

- 30 days of access to the NADOVO platform

€499

Flat rate

Duration: about 2 weeks

Book a first call

AI Compliance Audit

What you get

Everything is in place. Complete documentation, AI policy, staff training. You don't need to build anything yourself, just maintain it going forward.

- Structured shadow-AI discovery via workshop and stakeholder interviews

- Complete inventory of all AI systems in NADOVO

- Complete risk classification per EU AI Act

- Compliance roadmap with prioritised measures

- Mandatory documents under Art. 26 EU AI Act prepared

- Detailed compliance audit report

- 90 days of access to the NADOVO platform

- Coaching session for independent compliance maintenance

Individual offer

Price quoted after the initial call

Duration: about 4-6 weeks

Book a first call

Need long-term partnership?

With the AI Compliance Partnership, I support you with roadmap implementation, regular reviews and training, scaled to your needs. Available on request and agreed individually.

Free resource

EU AI Act Guide

The compact guide for German SMEs: obligations, deadlines, classification, and practical implementation steps. Available as a direct PDF download.

Download guide (PDF)

My Framework

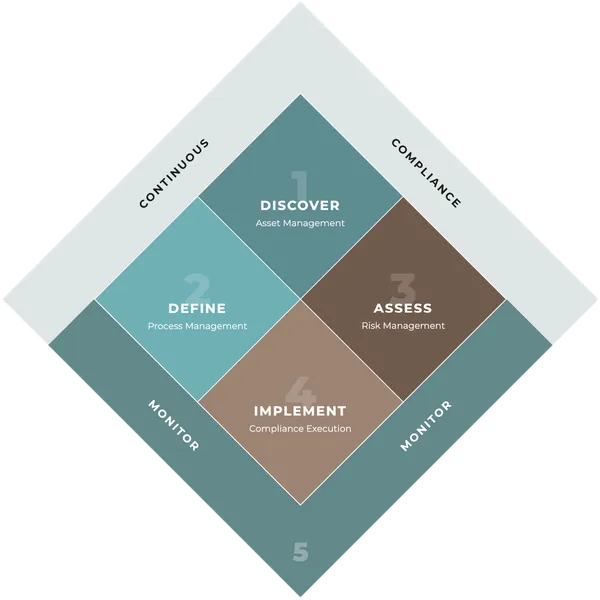

AI Compliance Framework

- DISCOVER: What do we have? Identify AI assets, determine roles (Provider/Deployer)

- DEFINE: What are we using it for? Asset + application area = Process → Risk class

- ASSESS: Which risks? Systematically evaluate HIGH-RISK processes

- IMPLEMENT: How do we execute? Measures, training, documentation

- MONITOR: How do we stay compliant? Continuous monitoring, incidents, reassessment

Frequently asked questions

Answers on EU AI Act compliance

Who is affected by the EU AI Act?

Almost every company that uses or develops AI systems is in scope of the EU AI Act. The regulation primarily distinguishes two roles: Providers develop AI systems or place them on the market under their own name. Deployers use existing AI systems under their own authority for professional purposes. Most SMEs are Deployers, for example when they use Microsoft Copilot, ChatGPT, or industry-specific SaaS tools.

The role is determined per AI system, not per company. A single organisation can simultaneously be a Provider for a system it built in-house and a Deployer for systems it procures. A deeper overview is available in my EU AI Act Guide (PDF).
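The per-system role assignment can be sketched in a few lines of Python. This is a minimal illustration, not legal advice: the `AISystem` class, the `built_in_house` flag and the example inventory are hypothetical, and a real role assessment looks at more than a single flag.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"


@dataclass(frozen=True)
class AISystem:
    name: str
    # Hypothetical simplification: True if the organisation developed the
    # system itself or places it on the market under its own name.
    built_in_house: bool


def role_for(system: AISystem) -> Role:
    """Assign the role per AI system, not per company."""
    return Role.PROVIDER if system.built_in_house else Role.DEPLOYER


# One organisation, two systems, two different roles.
inventory = [
    AISystem("Microsoft Copilot", built_in_house=False),
    AISystem("In-house scoring model", built_in_house=True),
]
roles = {system.name: role_for(system) for system in inventory}
```

Here the same organisation ends up as Deployer for the procured tool and as Provider for the system it built itself.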

What are the deployer obligations under Article 26?

For deployers of high-risk AI systems, Article 26 sets out a concrete catalogue of duties. These include using the system in line with the provider's instructions, ensuring human oversight by qualified personnel, controlling input data, and retaining automatically generated logs for at least six months.

On top of that, deployers must continuously monitor operation, report serious incidents to the provider and the market surveillance authority, and inform affected employees and their representatives before the system is used in the workplace. Under Article 27, public bodies, private providers of public services, and deployers of credit-scoring or life and health insurance risk-assessment systems must additionally conduct a fundamental rights impact assessment. Details in the article Deployer-Pflichten im EU AI Act (German only for now).
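The six-month minimum for log retention can be turned into a simple check. The following Python sketch assumes that "six months" is counted in calendar months from the day the log was created; the function names are hypothetical, and other Union or national law may require longer retention than this minimum.

```python
import calendar
from datetime import date

MIN_RETENTION_MONTHS = 6  # minimum for deployers of high-risk systems


def add_months(day: date, months: int) -> date:
    """Shift a date by whole calendar months, clamping to the month's end."""
    month_index = day.month - 1 + months
    year = day.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(day.day, last_day))


def earliest_deletion_date(log_created: date) -> date:
    """First day on which the minimum retention period has elapsed."""
    return add_months(log_created, MIN_RETENTION_MONTHS)


def may_delete(log_created: date, today: date) -> bool:
    """True only once the six-month minimum has passed (assumption: no
    longer retention period applies under other law)."""
    return today >= earliest_deletion_date(log_created)
```

A log written on 15 January 2026 would, under these assumptions, have to be kept until at least 15 July 2026.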

How do I know if my AI system is classified as high-risk?

The EU AI Act classifies applications, not tools. What matters is the concrete purpose of use, not the system itself. Article 6 ties the risk class to the intended purpose. The same AI model can be minimal-risk in one context and high-risk in another.

A system generally qualifies as high-risk when its use falls into one of the eight areas listed in Annex III: biometrics, critical infrastructure, education, employment and worker management, access to essential services, law enforcement, migration and asylum, justice and democratic processes. For SMEs, HR applications and creditworthiness checks are the most relevant. Article 6(3) allows narrow exceptions, but an Annex III system that profiles natural persons is always high-risk. More details in the article Hochrisiko-KI erkennen (German only for now).
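To illustrate why the purpose decides and not the tool, here is a minimal first-pass screening sketch in Python. The `UseCase` class, the result labels and the example use cases are hypothetical. The check only matches a use case against the eight Annex III areas named above; it ignores the exceptions in Article 6(3) and any other route to a high-risk classification, so it is a triage aid rather than a legal classification.

```python
from dataclasses import dataclass
from typing import Optional

# The eight Annex III areas, abbreviated as in the text above.
ANNEX_III_AREAS = frozenset({
    "biometrics",
    "critical infrastructure",
    "education",
    "employment and worker management",
    "access to essential services",
    "law enforcement",
    "migration and asylum",
    "justice and democratic processes",
})


@dataclass(frozen=True)
class UseCase:
    model: str
    intended_purpose: str
    area: Optional[str] = None  # Annex III area, or None if none applies


def first_pass_screen(use_case: UseCase) -> str:
    """Flag use cases that need a proper high-risk assessment."""
    if use_case.area in ANNEX_III_AREAS:
        return "review as high-risk"
    return "no Annex III match"


# Same model, two purposes, two different outcomes.
drafting = UseCase("general LLM", "drafting marketing copy")
ranking = UseCase(
    "general LLM",
    "ranking job applicants",
    area="employment and worker management",
)
```

With these inputs, `first_pass_screen(drafting)` yields no Annex III match, while `first_pass_screen(ranking)` flags the use case for a high-risk review.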

What is the NADOVO framework?

NADOVO is my 5-phase framework for EU AI Act compliance: DISCOVER (inventory AI assets and determine roles), DEFINE (combine assets with use cases into processes and assign risk classes), ASSESS (systematic risk assessment for high-risk processes), IMPLEMENT (deploy measures, training, and documentation), and MONITOR (continuous monitoring and reassessment).

The key idea is the process layer: it is not the tool that gets classified, but the combination of asset and use case. This way the effort scales with the actual risk instead of treating every tool uniformly. For systematic execution I additionally use NADOVO as a software platform that implements the framework, produces audit trails, and documents risk classifications. Overview in the article Der NADOVO Compliance Cycle (German only for now).

When do the EU AI Act rules take effect?

The EU AI Act takes effect in stages. Since 2 February 2025, the bans on unacceptable AI practices and the duty to ensure AI literacy under Article 4 have applied. Since 2 August 2025, the rules for general-purpose AI models have been in force. On 2 August 2026, the full requirements for high-risk systems under Annex III take effect. For most companies, that is the decisive deadline.

The Digital Omnibus proposal from November 2025 could push the high-risk deadline back to December 2027, but it is not yet law. Until the legislative process is finalised, August 2026 remains binding. More on the uncertainty in the article EU AI Act Deadline (German only for now).
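The staged timeline can be expressed as a small lookup. This Python sketch encodes only the three milestones named above, using the application dates of the regulation as currently in force; the `MILESTONES` list and the `applicable_on` function are hypothetical names, and the Digital Omnibus proposal is deliberately left out because it is not yet law.

```python
from datetime import date

# (application date, rule set), in chronological order.
MILESTONES = [
    (date(2025, 2, 2), "Bans on unacceptable practices and AI literacy (Article 4)"),
    (date(2025, 8, 2), "Rules for general-purpose AI models"),
    (date(2026, 8, 2), "High-risk requirements under Annex III"),
]


def applicable_on(day: date) -> list[str]:
    """Return every rule set that already applies on the given day."""
    return [label for start, label in MILESTONES if start <= day]


def days_until_high_risk_deadline(today: date) -> int:
    """Days remaining until the Annex III milestone (negative once passed)."""
    return (MILESTONES[-1][0] - today).days
```

If the legislative process changes the high-risk date, only the last entry in `MILESTONES` would need to be updated.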

Do I really need external consulting for EU AI Act compliance?

Strictly speaking no, but in most cases it makes sense. The EU AI Act contains 180 recitals, 113 articles, and 13 annexes, with different duties depending on role and risk class. Correctly classifying an AI-driven recruitment tool, conducting a fundamental rights impact assessment for high-risk systems, or judging when a deployer inadvertently becomes a provider requires domain expertise.

What consulting alone does not solve: compliance is not a state but an ongoing process. For high-risk systems, Article 12 requires automatic event logging, and Article 18 requires providers to keep their technical documentation available for ten years. Every change, risk assessment, and measure therefore needs a traceable record. Excel sheets and static PDF reports fall short here. What works is a combination of expert judgement and a structured process that operationalises the consulting output. Details in the article KI-Compliance braucht Struktur (German only for now).

Next step

Ready for a first call?

30 minutes, free of charge. Together we clarify whether and how I can help.

Book a first call